Accelerate Your AI Skills Today: The Million-Dollar Job!

The skyrocketing salaries of AI engineers (up to $1m per year) are not hype. They are a fact of today's corporate world, and they reflect a shift that is inevitable.

We have already set foot at the edge of a technological revolution, one that is on the verge of altering the way we live and work. Humanity has fundamentally transformed production in three industrial revolutions, and we are now entering the fourth. In scope, the fourth revolution promises a transformation unlike anything humans have ever experienced.

- The first revolution transformed the world from rural to urban.

- The second revolution brought the emergence of mass production.

- The third revolution introduced digital technology.

- The fourth industrial revolution is poised to integrate technologies into every part of our lives.

This is largely thanks to artificial intelligence (AI), an advanced technology that already surrounds us, from virtual assistants to translation software to self-driving cars.

The exponential rise of AI has disrupted almost every industry, so much so that AI is now described as a million-dollar profession.

Did this grab your attention? It did?

Now, what if we told you that salary compensation for AI experts has grown dramatically? AI and machine learning face a mountain of demand in the tech industry today, but the supply of talent is sparse.

The AI field is growing fast, and salaries are skyrocketing! See for yourself what AI experts, AI researchers, and other AI talent are commanding today:

- According to OpenAI, a top-class AI research laboratory, fresh graduates in the AI field can expect salary compensation of $300k to $500k, while experienced professionals can earn up to $1m.

- Start Today, a Japanese firm that operates the fashion shopping website Zozotown, is offering AI geniuses a whopping salary package of more than 100 million yen (roughly $1m).

Does this leave you with a question: is this the right opportunity for you to jump into the field and make hay while the sun shines?

The answer is yes. This is the right opportunity for any developer seeking a role in the AI industry. It can be your chance to help close the AI skills gap by upskilling or reskilling yourself.

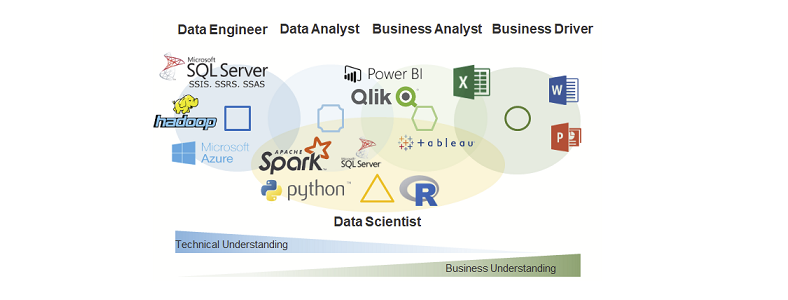

There is a wide variety of roles available for an AI enthusiast like you. Certain roles, such as AI engineer and AI researcher, are in high demand because few professionals have robust AI knowledge.

According to the job report "The Future of Jobs 2018," machines and algorithms are expected to create around 133 million new job roles by 2022.

AI and machine learning will dominate the tech world. According to the World Economic Forum, several sectors have started embracing AI and machine learning to tackle challenges in areas such as advertising, supply chain, manufacturing, smart cities, drones, and cybersecurity.

Unraveling the AI realm

From chatbots to financial planners, AI is changing the way businesses function on a day-to-day basis. It makes work simpler and more streamlined.

Alright! You now know that:

- the demand for AI professionals is rising exponentially, while the supply is only a trickle

- AI professionals command skyrocketing salaries

However, beyond that, how much more do you know about AI?

Considering that our lives have already been touched by AI (think Alexa and Siri), it is only a matter of time before AI becomes an indispensable part of our lives.

Gartner predicts that 2020 will be a pivotal year for AI-driven business growth, which could spark significant employment growth. Although AI is expected to eliminate 1.8 million jobs, it is also expected to create 2.3 million new ones, a net gain of 500,000. Looking beyond 2020, AI-related job creation is projected to reach 2 million net-new jobs by 2025.

With AI promising fat paychecks that can reach millions, aspiring AI experts are scrambling to pick up new skills. Even so, one of the biggest forces shaping the job market today is the scarcity of talent in this field.

The best way to stay relevant and employable in AI is probably through reskilling and upskilling, and AI certifications are considered ideal for those already in the workforce.

Looking to upskill yourself? Here's how you can become an AI engineer today.

Top three ways to enhance your artificial intelligence career:

- Acquire skills in statistics and machine learning: If you're getting into machine learning, you need in-depth knowledge of statistics, which is considered a prerequisite for ML. The two fields are tightly related: machine learning models are built to make accurate predictions, while statistical models interpret the relationships between variables. Many ML techniques rely heavily on statistical theory, so extensive knowledge of statistics is the first step toward an AI career.

- Online certification programs in AI: Earning AI certifications will boost your credibility with potential employers. Certifications can also enhance your earning potential and marketability. If you're looking for a change and want to be part of something impactful, join the AI bandwagon. The IT industry is growing at breakneck speed, and businesses are now realizing how important it is to hire professionals with specific skill sets. In particular, AI-certified professionals are increasingly sought after in the job market.

- Hands-on experience: There's a vast difference between theory and practice. You need to familiarize yourself with the latest tools and technologies used in industry, and that is possible only if you are willing to work on projects and build things from scratch.

Despite all its promise, AI does pose a threat to workers who don't upskill or reskill. The upcoming AI revolution will certainly disrupt the way we work, but it will also leave room for humans to take on more creative jobs in the corporate world of the future.

So, a word of advice: be prepared and stay future-ready.

Prof. Sven Buchholz holds a professorship in Data Management and Data Mining in the Department of Computer Science and Media (Fachbereich Informatik und Medien) at TH Brandenburg. He is the scientific director of the project "Datenkompetenz 4.0 für eine digitale Arbeitswelt" (Data Literacy 4.0 for a Digital World of Work), based at the Agentur für wissenschaftliche Weiterbildung und Wissenstransfer – AWW e. V., and a lecturer of the advanced course "

Prof. Sven Buchholz hat eine Professur für die Fachgebiete Data Management und Data Mining am Fachbereich Informatik und Medien an der TH Brandenburg inne. Er ist wissenschaftlicher Leiter des an der Agentur für wissenschaftliche Weiterbildung und Wissenstransfer – AWW e. V. angesiedelten Projektes „Datenkompetenz 4.0 für eine digitale Arbeitswelt“ und Dozent des Vertiefungskurses „